Picture a scene that plays out every year in thousands of organizations. An employee opens the mandatory security awareness module. They read that phishing is dangerous, that you should check the sender, that you never share passwords. They move to the next slide. A three-minute video plays. Next slide. A quiz with four options, three obviously wrong. They pick the right answer in twenty seconds, get the certificate, and go back to work.

The following Monday, at nine in the morning, they get an email that reads “overdue invoice: immediate action required”. They click. It’s not that they didn’t know what phishing is. They had just reviewed it on Friday. It’s that they had never practiced the decision not to click in a real context.

This is the quiet gap in traditional security awareness. A program can meet the hours, pass the audit, and still leave employee behavior exactly where it was. Closing that gap requires a change that isn’t in the content itself, but in how it’s presented. That’s where interactive slides come in.

Why traditional cybersecurity e-learning doesn’t change behavior

Daniel Kahneman described two systems of thinking: a fast, automatic, emotional System 1, and a slow, deliberate, analytical System 2. Critical cybersecurity decisions almost always happen in System 1. When the urgent email arrives, when the phone rings with a familiar voice, when the red button asks for immediate action, the brain isn’t calmly analyzing signals. It’s reacting.

The problem is that classic e-learning trains System 2. Reading, video, multiple-choice quiz. All that content gets processed in the slow mode of the brain: the one that steps in when there’s time to think. And on Monday morning, facing a suspicious email, that isn’t the mode that decides.

The familiar outcome: programs inform about risks, but automatic responses don’t change. The regulatory requirement is met; actual risk isn’t reduced.

Learning by doing, not by reading

The difference between informing and training is the same as between reading a sailing manual and stepping onto a sailboat. Someone can know the theory of knots by heart and, when the rope is in their hands with a crosswind, not know where to start. The brain learns to decide in the context where it will have to decide.

This is what a well-designed awareness module aims for: to reproduce the scenario, not describe it. The employee doesn’t read what a fraudulent email is: they look at it, analyze it, mark the signals. They don’t listen to what to do when facing a ransomware incident: they see the red screen, feel the pressure of the timer, decide the first step.

When practice happens in context, training gets stored in the system that will later react. It’s the same principle any serious discipline applies: pilots train in simulators, not in textbooks. Cybersecurity awareness deserves the same level of instructional rigor.

Variety that sustains attention

The other enemy of traditional e-learning is predictability. When the third slide looks like the second, and the fourth looks like the third, the brain goes on autopilot: it reads without reading, clicks without looking, waits for the next screen. Learning shuts down long before the final quiz.

Breaking that inertia requires real variety of formats. Not changing the background color. Changing the type of mental activity demanded from the user. One slide has the employee identifying signals in an email. The next asks them to classify information as confidential or public. The one after that drops them into a virtual office scene to spot physical security risks. Each screen activates a different cognitive process.

Curiosity holds up because the user doesn’t know what’s coming next. And that curiosity, as I’ve explored in other articles on game-based learning and gamification applied to awareness, is exactly the mental state in which the brain locks in new learning. No curiosity, no long-term memory.

Feedback that supports, instead of judging

There’s a decision about tone in interactive modules that looks small and isn’t: how the user is addressed when they make a mistake.

The instinctive design reaction is to mark the error with red, an exclamation point, and a corrective sentence. “Error. You must always report phishing attempts.” It feels firm and instructive, but it produces the opposite effect: it raises the anxiety of learning and lowers the willingness to try. The user learns that being wrong hurts, not that being wrong teaches.

The alternative is to acknowledge first what was done right. If someone hung up a suspicious call but didn’t report it, useful feedback isn’t “you forgot”. It’s: “you hung up the call, that was right. The step missing is to report it to the IT team”. The message is the same; the emotional trace is different. In one, the mistake closes the door. In the other, it leaves it open.

This principle, supporting instead of judging, sits at the heart of educational moments and nudges, the short interventions that shape behavior without confronting it. Security awareness isn’t an exam. It’s a process of building culture, and culture isn’t built with reprimands.
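
For teams building their own modules, the acknowledge-first pattern described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the step names, the wording table, and the `supportive_feedback` function are hypothetical and do not come from any particular product.

```python
# Hypothetical wording table: step id -> (phrase when done, phrase when missing).
STEPS = {
    "hang_up": ("you hung up the call", "hang up the call"),
    "report": ("you reported it to the IT team", "report it to the IT team"),
}


def supportive_feedback(expected, completed):
    """Acknowledge what was done right first, then name the first missing step."""
    done = [STEPS[s][0] for s in expected if s in completed]
    missing = [STEPS[s][1] for s in expected if s not in completed]

    parts = []
    if done:
        praise = " and ".join(done)
        # Capitalize only the first letter so acronyms such as "IT" are preserved.
        parts.append(praise[0].upper() + praise[1:] + ", that was right.")
    if missing:
        parts.append("The step missing is to " + missing[0] + ".")
    return " ".join(parts)


print(supportive_feedback(["hang_up", "report"], {"hang_up"}))
```

The design choice is the ordering: the message is assembled so that recognition always comes before the correction, and only one missing step is named at a time.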

Three training moments, told as experience

The best way to show how interactive e-learning changes things is to describe what the employee actually experiences in front of the screen. We picked three scenes from SMARTFENSE’s new library of interactive slides, designed with more than forty resources and twenty-six different types of interaction.

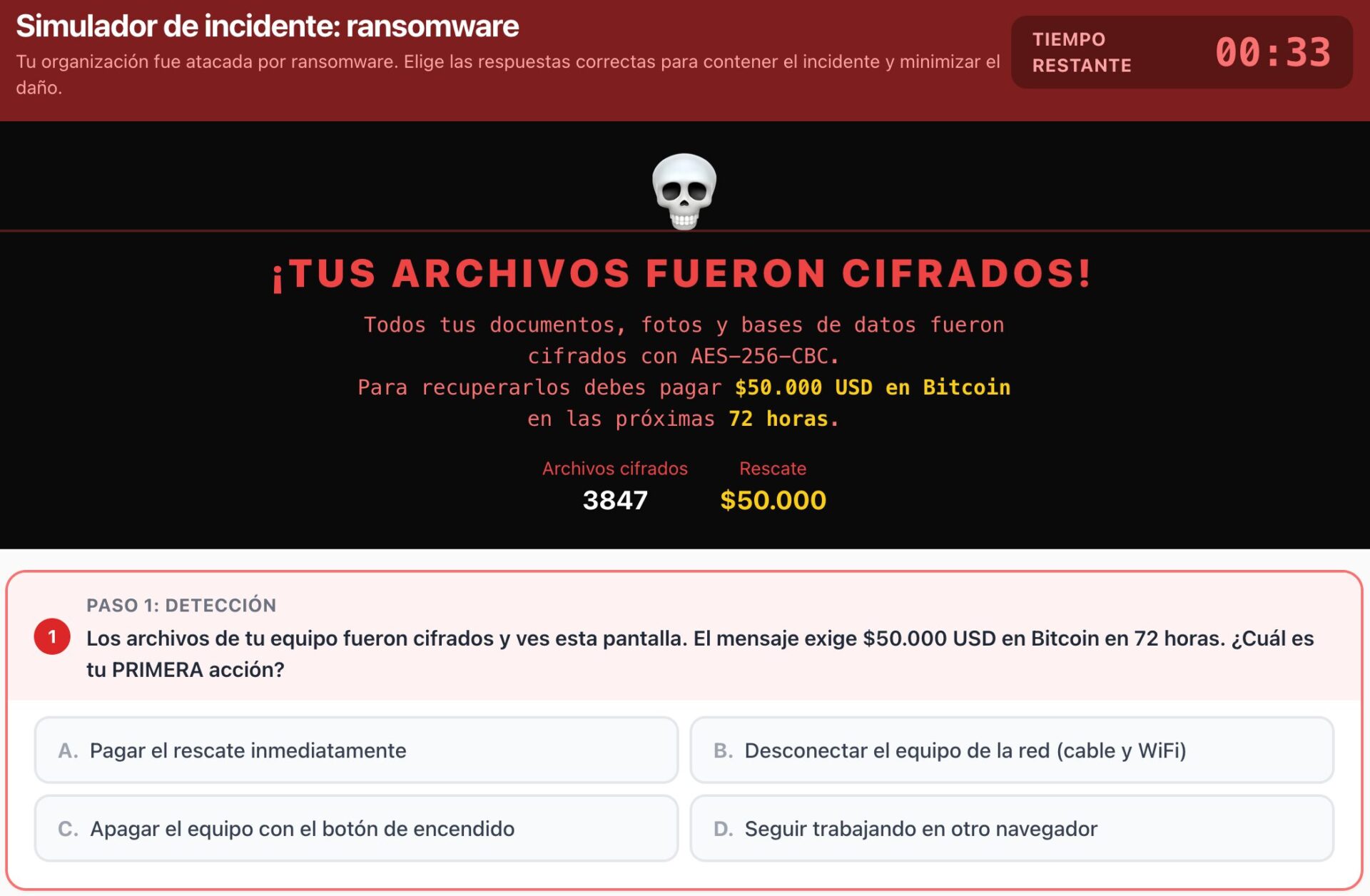

Ransomware incident simulator. A black screen appears with a red message: “Your files have been encrypted!” A countdown clock starts at thirty-three seconds. Below, a simple question: what is your FIRST action? Four options, some plausible, one correct. The employee has to decide under the same time pressure they would feel in the real moment of the attack. The correct answer isn’t learned in the abstract. It’s trained where it will be needed.

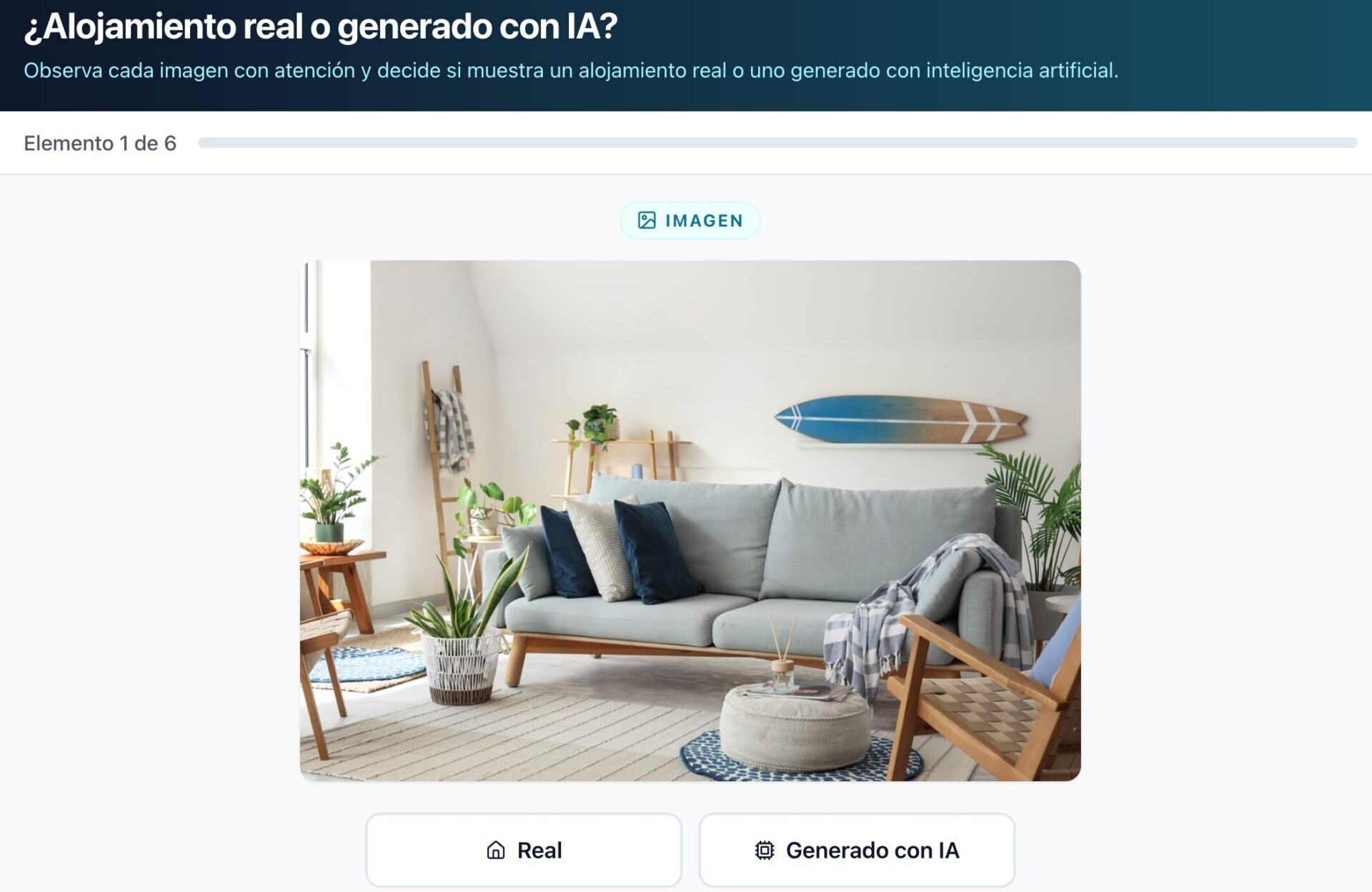

Real listing or AI-generated? A photo appears of a bright living room with a gray sofa, a surfboard on the wall, and a couple of plants. Two buttons below: real or AI-generated. Then another image. Then audio. Then video. The employee trains their eye and ear for a threat that didn’t exist three years ago: fraud with synthetic content, now used in everything from rental scams to voice-cloning impersonation of family members.

Social engineering chat. A messaging interface opens. The attacker starts friendly, almost innocent. One message, two messages, five messages. The employee replies line by line and sees how an ordinary conversation escalates into a specific request for confidential information. What a bullet point would describe as “attackers manipulate trust over time” becomes a concrete experience: trust is in fact built in small steps. And the learning settles in a different part of the brain.

What changes when security awareness stops being a checkbox

An awareness program stops being a checkbox when employees can tell you on Monday morning what they learned, not how many hours they racked up by the end of the year. To get to that outcome, content alone isn’t enough. You need a way of presenting it that activates System 1, sustains attention, and turns every mistake into an open door.

For the organization, the shift also shows up in the reporting. When every interactive slide records granular performance (which phishing signals were missed, where the social engineering conversation was cut off, how often the user confused AI-generated content with real content), the security lead has usable information, not just “module completed”. They go from knowing that people took the course to knowing what they didn’t learn and where to intervene. And that difference, as we discussed in measuring what matters in an awareness program, is what separates programs that look good at audit from programs that actually reduce risk.
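
To make "granular performance" concrete, here is a minimal sketch of what a per-slide record and a simple aggregation could look like. The `SlideResult` schema, the slide names, and the signal labels are all assumptions made for illustration; they do not describe how any specific platform stores its data.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class SlideResult:
    """One user's outcome on one interactive slide (hypothetical schema)."""
    user: str
    slide: str     # e.g. "phishing_email" or "ai_or_real"
    missed: tuple  # labels of the signals or items the user got wrong


def most_missed(results, slide):
    """Count how often each item was missed on a slide, most frequent first."""
    counts = Counter()
    for r in results:
        if r.slide == slide:
            counts.update(r.missed)
    return counts.most_common()


# Illustrative data only.
results = [
    SlideResult("ana", "phishing_email", ("sender_domain", "urgent_tone")),
    SlideResult("ben", "phishing_email", ("sender_domain",)),
    SlideResult("ana", "ai_or_real", ("synthetic_audio",)),
]

print(most_missed(results, "phishing_email"))
# [('sender_domain', 2), ('urgent_tone', 1)]
```

A report built this way answers "which signal do people miss most" rather than "who completed the module", which is the kind of question that tells a security lead where to intervene.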

The invitation is simple: experience the new library of interactive slides inside SMARTFENSE firsthand and see what changes when e-learning stops asking for attention and starts asking for decisions.
